Support &

Documentation

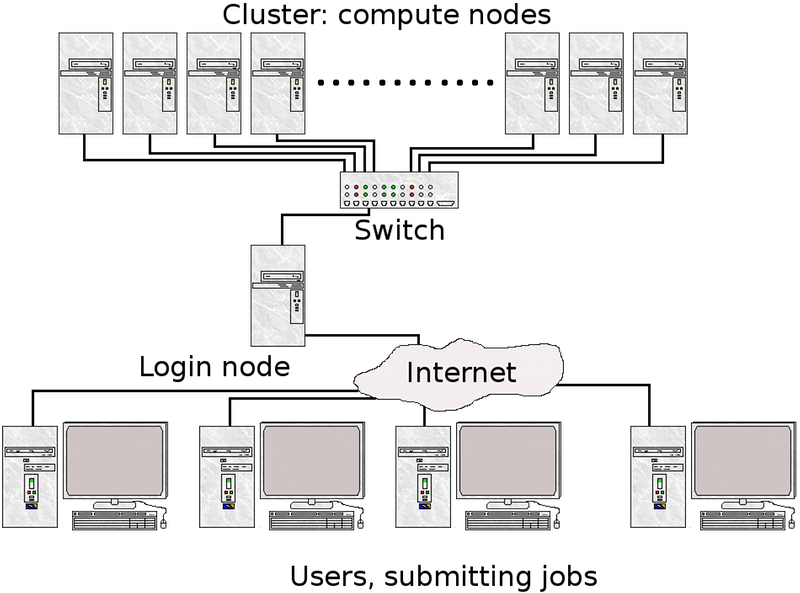

A computer cluster consists of a number of computers (few or many), linked together and working closely together. In many ways, the computer cluster works as a single computer. Generally, the component-computers are connected to each other through fast local area networks (LANs).

The advantage of computer clusters over single computers, are that they usually improves the performance (and availability) greatly, while still being cheaper than single computers of comparable speed and size.

A node is the name usually used for one unit (usually one computer) in a computer cluster. Generally, this computer will have one or two CPUs, each normally with more than one core. The memory is always shared between cores on the same CPU, but generally not between the CPUs. This means programs using only multi-threaded, shared memory programming interfaces, like OpenMP, is not generally suited for clusters, unless there are many cores per CPU and much memory per CPU.

Normally, clusters have some sort of batch or queueing system to handle the jobs, as well as a communication network.

With few exceptions, all computer clusters are running Linux.

A supercomputer is simply a computer with a processing capacity (generally calculation speed) high enough to be considered at the forefront of today. For many years, this has been single computers with many CPUs and usually shared memory - sometimes built specifically for a certain task. They have often been custom-built machines, like Cray, and still sometimes is. However, as desktop computers have become cheaper, most supercomputers today are made up of many "off the shelf" ordinary computers, connected in parallel.

So, a supercomputer is not the same as a computer cluster, though a computer cluster is often a supercomputer.

In general, jobs are run with a batch- or queueing system. There are several variants of these, with the most common working by the user logging into a "login node" and then putting together and submitting their jobs from there.

In order to start the job, a "job script" will usually be utilised. In short, it is a list of commands to the batch system, telling it things like: how many nodes to use, how many processors, how much memory, how long to run, program name, any input data, etc. When the job has finished running, it will have produced a number of files, like output data, perhaps error messages, etc.

Since the jobs are queued in the system and will run when they are able to get a free spot, it is not generally a good idea to run programs that require any kind of user interaction, like som graphical programs.

Computer clusters are built up of many connected nodes, with a limited number of cores and memory each. In order to get a speed-up, the problem must be parallelliseable.

This can be done in several ways. A simple way is to just divide it into smaller parts, and then run the program many times for variations of parameters on your input data. The tasks are each serial programs, running on one node each, but many instances are running at the same time. Another option is to use a more complex parallelisation that requires changes done to the program, in order to allow it to be split between several processors/nodes, that communicate over the network. This is generally done with MPI or similar.

Tasks that can be split up into many serial jobs will run faster on a computer cluster.

No special programming is needed, but you can only run on one node for each task. It is good for long-running single-threaded jobs.

A job scheduler is used to control the flow of tasks. Using a small script, many instances of the same task (for instance a program, each time run with slightly different parameters) can be set up. The tasks will be put in a job queue, and will run as free spaces open up in the queue. Normally, the tasks will run many at a time, since they are serial and so each only use one node.

Example:

You send out 500 tasks (say, run a small program for 500 different temperatures). There are 50 cores on the machine, that you can access. 50 instances are started and then run, while the remaining 450 tasks wait. When the running programs finishes, the next 50 will start, and so on. This means it is as if you ran on 50 computers instead of one, and you will finish in a fiftieth the time. Of course, this is an ideal example. In reality there may be overhead, waiting time between batches of jobs starting, etc. meaning the speed-up is not as great, but it will run faster.

In order to take advantage of more than one node per job and get speed-up this way, you need to do some extra programming. In return, the job can run across several processors that communicate over the local network.

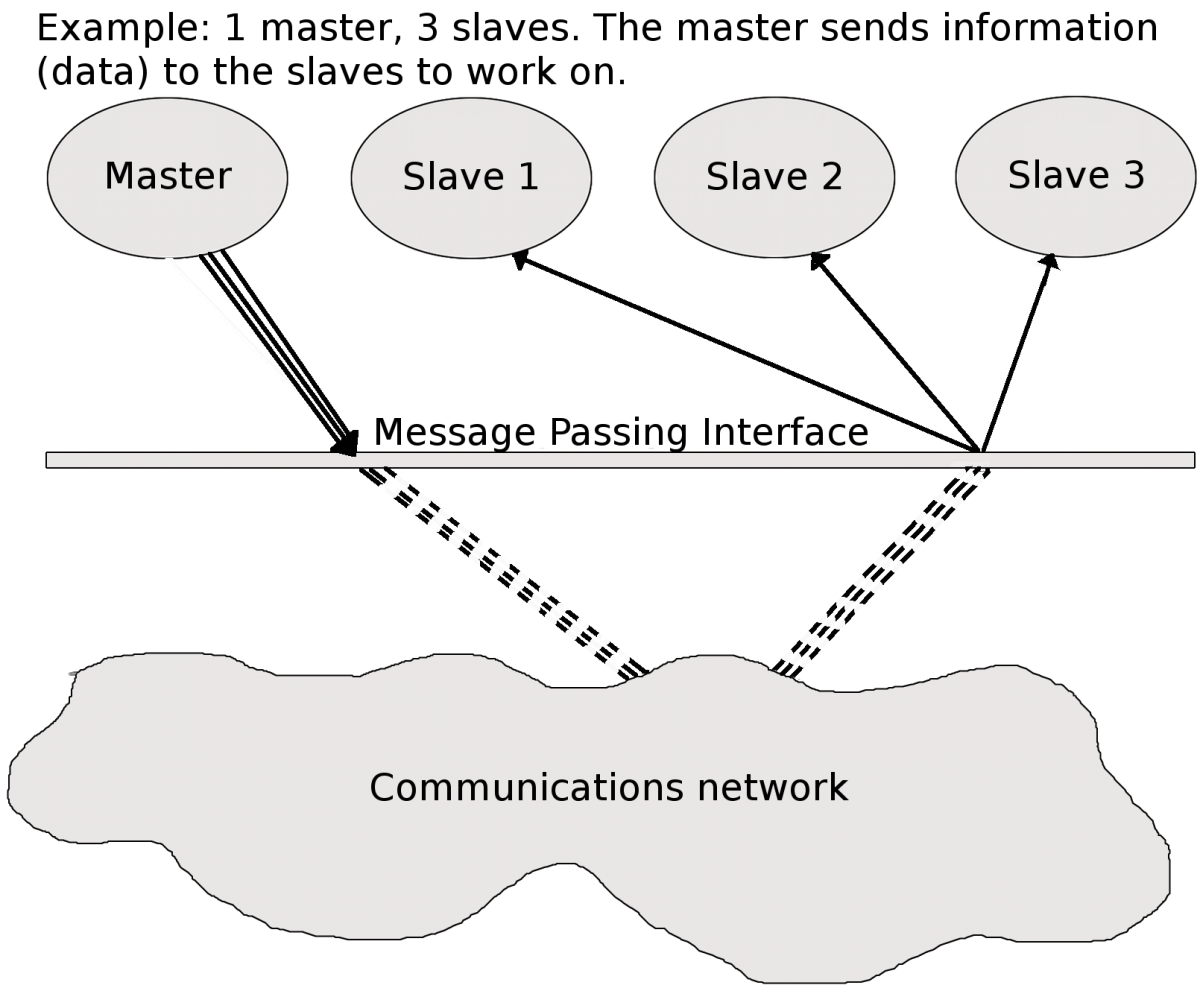

MPI is a language-independent communications protocol used to program parallel computers. There are several MPI implementations, but most of them consists of a set of routines that are callable from Fortran, C, and C++, as well as any language that can interface with their libraries. MPI is very portable and it is generally optimized for the hardware it runs on, so will be reasonably fast.

Programs that are parallelizable, should be reasonably easy to convert to MPI programs by adding MPI routines to it.

In order for a problem to be parallelizable, it must be possible to split it into smaller sections that can be solved independently of each other and then combined.

What happens in a parallel program is generally this: There is a 'master' processes that controls splitting out the data etc. and communicate it to one or more 'slave' processes that does the calculations. The 'slave' processes then sends the results back to the 'master'. It combines them and/or perhaps sends out further subsections of the problem to be solved.

Examples of parallel problems:

Shared memory is memory that can be accessed by several programs at the same time, enabling them to communicate quickly and avoid redundant copies. Generally, shared memory refers to a block of RAM accessible by several cores in a multi-core system. Computers with large amounts of shared memory, and many cores per node are well suited for threaded programs, using OpenMP and similar.

Computer clusters built up of many off-the-shelf computers usually have a smaller amount of shared memory and fewer cores per node than custom built single supercomputers. This means they are more suited for programs using MPI than OpenMP. However, the number of cores per node is going up and at least quad-core chips are common. This means combining MPI and OpenMP may be advantageous.

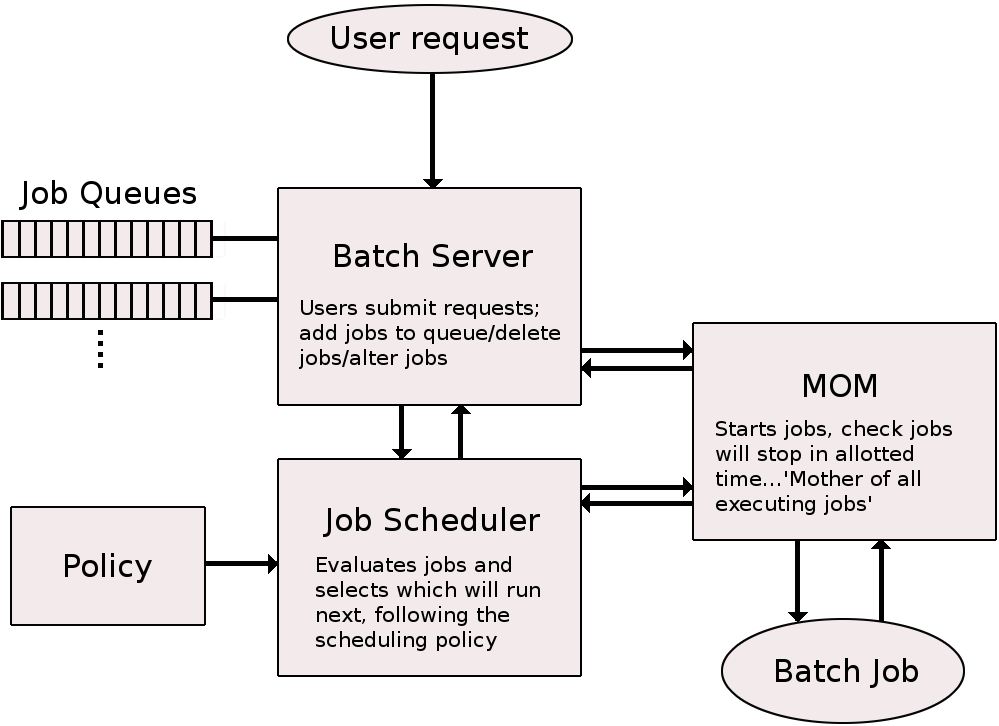

Once a parallel program has been successfully compiled it can be run on multi-processor/multi-core computing nodes directly or, in production environment, by means of a batch system. Batch system keeps track of available system resources and takes care of scheduling jobs of multiple users running their tasks simultaneously. It typically organizes submitted jobs into a three-part priority queue (running, idle, blocked). The batch system is also used to enforce local system resource usage and job scheduling policies.

In order to submit a job to the batch system, you generally use a job script. This is a shorter or longer list of commands, both to the batch system itself (number of nodes/processors to use, memory requirements, etc.), and commands that would otherwise be entered at the command line, like changing directories. When the job has completed, the output can be accesses. Likewise, any errors that happened will be logged in an error-file, which may be studied afterwards.

Directives to the SLURM batch system is preceeded with #SBATCH. Comments are preceeded by #.

Note that the project ID MUST be formated as SNICXXX-YY-ZZ, NAISSXXXX-YY-ZZ, or hpc2nXXXX-YYY (spaces and slashes are not allowed in the project ID. The letters can be upper or lower case though). Explanations of the most common SLURM directives can be found in our "Short Quick-start Guide".

Small script example

#!/bin/bash # Project to run under - change to your actual local/SNIC/NAISS id project number #SBATCH -A hpc2nXXXX-YYY # The project id can look like SNICXXX-YY-ZZ, NAISSXXXX-YY-ZZ, or hpc2nXXXX-YYY # Name of the job (makes easier to find in the status lists) #SBATCH -J Parallel # name of the output file #SBATCH --ooutput=test.out # name of the error file #SBATCH --error=test.err # asking for 4 cores #SBATCH -n 4 # the job can use up to 30 minutes to run #SBATCH --time=00:30:00 # load any modules you need. In this example, GCC compilers with OpenMPI # this should be changed according to which modules/programs are needed ml GCC/5.4.0-2.26 ml OpenMPI/2.0.2 # run the program - start parallel programs with srun srun ./my_parallel_program

More examples can be found here.

Jobs are submitted with the command qsub. If we assume your jobscript is called my_jobscript, the submission is done like this:

sbatch my_jobscript

There are other options, like giving the commands for number of nodes, memory, walltime, etc. on the command line, or running an interactive job, which is mostly done for debugging purposes or during development.

You can find more information about job submission here.

It may take a while before a submitted job is allowed to run (see policies), it may run for a long time, or it may not run at all because there are not sufficient resources available for what you have asked for. Therefore it is a good idea to check the status of a job. There are a number of commands for doing this:

More information can be found here.

To stop the job before it finishes:

scancel <jobid>

You get the job id from the squeue -u <username> command. The job id is also shown just after the job starts.

HPC2N has several computer clusters. For more detailed information, look at the HPC2N hardware pages.

Information about applying for a user account at HPC2N can be found here. You need a user account if you have a project at HPC2N. Accounts can be renewed the same place.

In order to apply for a new project, see here.

Ay HPC2N, the home directories are backed up regularly. The home directory is small (by default 25GB), and batch jobs should generally not be submitted from your home directory or any subdirectory of it. You should run all batch jobs from your project storage (/proj/nobackup/<your-storage-dir>). Likewise, your executables as well as all your data should be located in your storage project. You should only use your home directory for things that cannot easily be recreated, like configuration files, your own (or your groups) code, etc. You can read more about the file systems here.

Kebnekaise at HPC2N run SLURM as batch scheduling system. It has a system resource manager (allocates and enforces limits on nodes, processors, memory, etc.), and a job scheduler (handles job scheduling policies). The jobs are scheduled according to a set of policy rules and priorities which gives the user access as fairly as possible with respect to allotted resources.